2022

Wirzberger, M., Lado, A., Prentice, M., Oreshnikov, I., Passy, J., Stock, A., Lieder, F.

Can we improve self-regulation during computer-based work with optimal feedback?

Behaviour & Information Technology, November 2022 (article) Submitted

He, R., Lieder, F.

Learning-induced changes in people’s planning strategies

November 2022 (article) Submitted

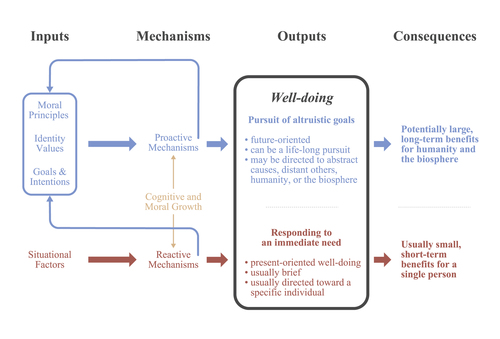

Lieder, F., Prentice, M., Corwin-Renner, E.

An interdisciplinary synthesis of research on understanding and promoting well-doing

Social and Personality Psychology Compass, 16(9), September 2022 (article)

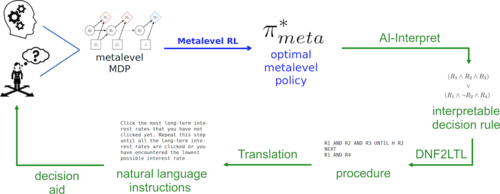

Becker, F., Skirzyński, J., van Opheusden, B., Lieder, F.

Boosting human decision-making with AI-generated decision aids

Computational Brain & Behavior, 5(4):467-490, July 2022 (article)

Mehta, A., Jain, Y. R., Kemtur, A., Stojcheski, J., Consul, S., Tosic, M., Lieder, F.

Leveraging machine learning to automatically derive robust decision strategies from imperfect models of the real world

Computational Brain & Behavior, 5:343-377, Springer Nature, June 2022 (article)

Consul, S., Heindrich, L., Stojcheski, J., Lieder, F.

Improving Human Decision-Making by Discovering Efficient Strategies for Hierarchical Planning

Computational Brain & Behavior, 5:185-216, Springer, 2022 (article)

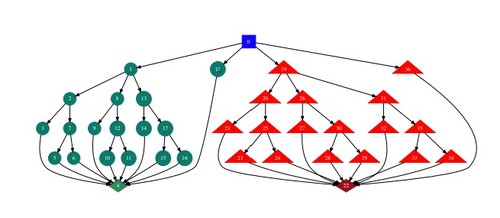

Callaway, F., van Opheusden, B., Gul, S., Das, P., Krueger, P. M., Griffiths, T. L., Lieder, F.

Rational use of cognitive resources in human planning

Nature Human Behaviour, 6:1112-1125, April 2022 (article)

Pauly, R., Heindrich, L., Amo, V., Lieder, F.

What to learn next? Aligning gamification rewards to long-term goals using reinforcement learning

March 2022 (article) Accepted

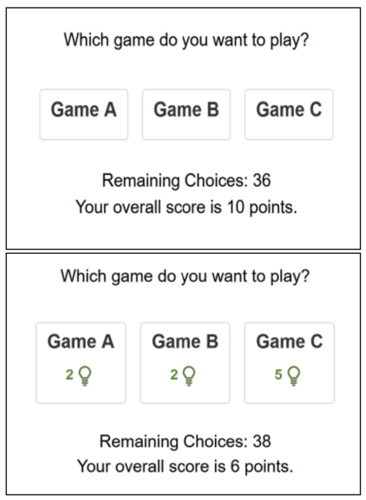

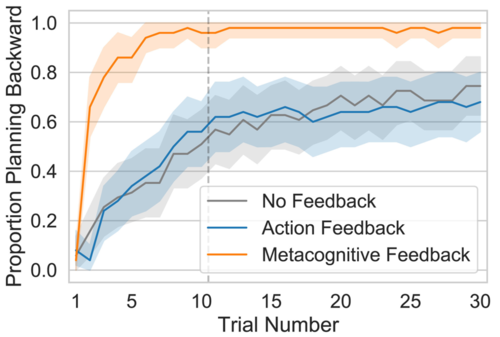

Callaway, F., Jain, Y. R., van Opheusden, B., Das, P., Iwama, G., Gul, S., Krueger, P. M., Becker, F., Griffiths, T. L., Lieder, F.

Leveraging artificial intelligence to improve people’s planning strategies

PNAS, 119(12), March 2022 (article)

Krueger, P. M., Callaway, F., Gul, S., Griffiths, T. L., Lieder, F.

Discovering Rational Heuristics for Risky Choice

PsyArXiv Preprints, January 2022 (article) Submitted